AI Security & Innovation

Symosis helps CISOs securely adopt AI by combining deep cybersecurity expertise with modern automation and governance. From AI policy to secure LLM workflows, we deliver the controls, tooling, and visibility today’s enterprises need.

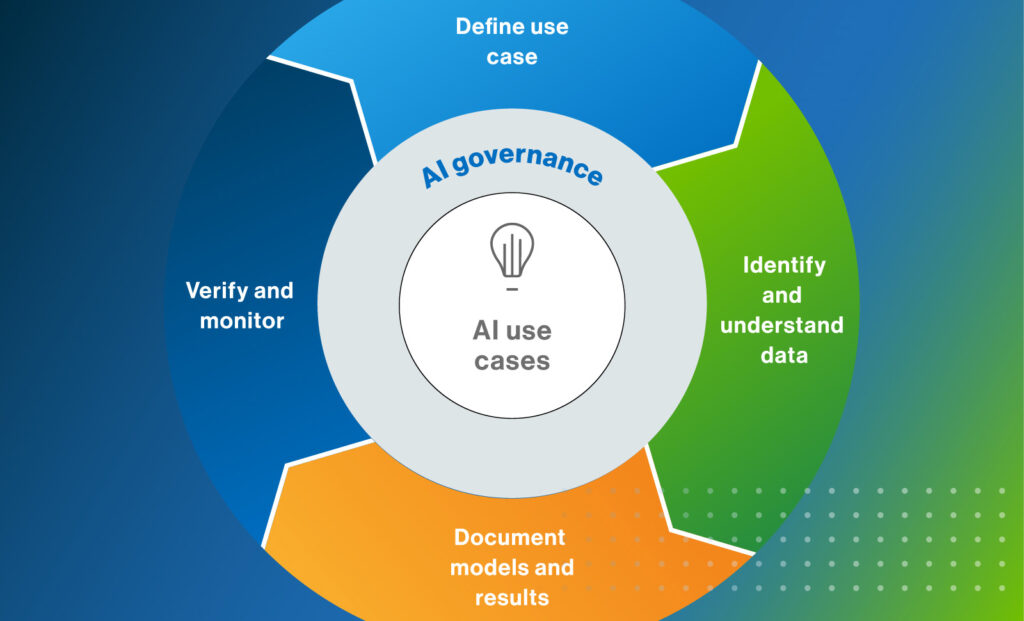

AI Policy & Governance Development

The Problem:

Organizations are deploying GenAI tools faster than they can govern them, leading to shadow AI use, data leakage, and regulatory risk. Most lack clear policies, approval workflows, or governance models.

Our Approach:

Symosis works with your Legal, Risk, Cyber, and Data teams to create a unified governance framework for AI. We deliver:

AI acceptable use policies and shadow AI controls

Use case risk assessments

Policies aligned to NIST AI RMF, ISO 42001, and the EU AI Act

Governance Committee and approval workflows

How It Helps:

You gain centralized control over how AI is adopted and used across the organization, which reduces risk, enables innovation, and helps you meet regulatory expectations.

AI Security Tooling & Integration

The Problem:

Security teams are overwhelmed by alerts and repetitive triage tasks. GenAI tools can help, but out-of-the-box copilots often lack context, guardrails, or integration with SecOps workflows.

Our Approach:

Symosis builds and integrates secure, auditable copilots and LLM workflows using:

Microsoft 365 Copilot, Azure OpenAI, or custom GPTs

Embedded ServiceNow/Jira integrations

Role-based access, logging, and prompt guardrails
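As a rough illustration of how role-based access, logging, and prompt guardrails can sit in front of a copilot, the Python sketch below checks a caller's role against an allowed-action map, redacts two sensitive patterns from the prompt, and writes a JSON audit entry before any model call. The role names, actions, regex patterns, and the `guarded_request` helper are all hypothetical. A production deployment would pull roles from its identity provider and use a fuller data-loss-prevention rule set. The actual model call (for example, to an Azure OpenAI deployment) is left as a comment.

```python
import json
import re
from datetime import datetime, timezone

# Hypothetical role-to-action policy; a real deployment would source this from an IdP or config.
ROLE_PERMISSIONS = {
    "soc_analyst": {"summarize_alert", "triage_alert"},
    "soc_lead": {"summarize_alert", "triage_alert", "close_incident"},
}

# Illustrative redaction patterns only; not an exhaustive DLP rule set.
REDACTION_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def redact(prompt: str) -> str:
    """Replace sensitive substrings with typed placeholders."""
    for label, pattern in REDACTION_PATTERNS.items():
        prompt = pattern.sub(f"[REDACTED_{label.upper()}]", prompt)
    return prompt


def guarded_request(user: str, role: str, action: str, prompt: str, audit_log: list) -> str:
    """Enforce RBAC, redact the prompt, and record an audit entry before any model call."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    # Denied requests are logged without the prompt to avoid retaining sensitive input.
    safe_prompt = redact(prompt) if allowed else ""
    audit_log.append(json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "allowed": allowed,
        "prompt": safe_prompt,
    }))
    if not allowed:
        raise PermissionError(f"role {role!r} may not perform {action!r}")
    # Hypothetical: a real integration would send safe_prompt to the model here.
    return safe_prompt
```

Denied requests still produce an audit entry, so the log records the attempt even though the prompt itself is not retained.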

How It Helps:

Faster triage, fewer false positives, and consistent responses—without exposing sensitive data or introducing new risks.

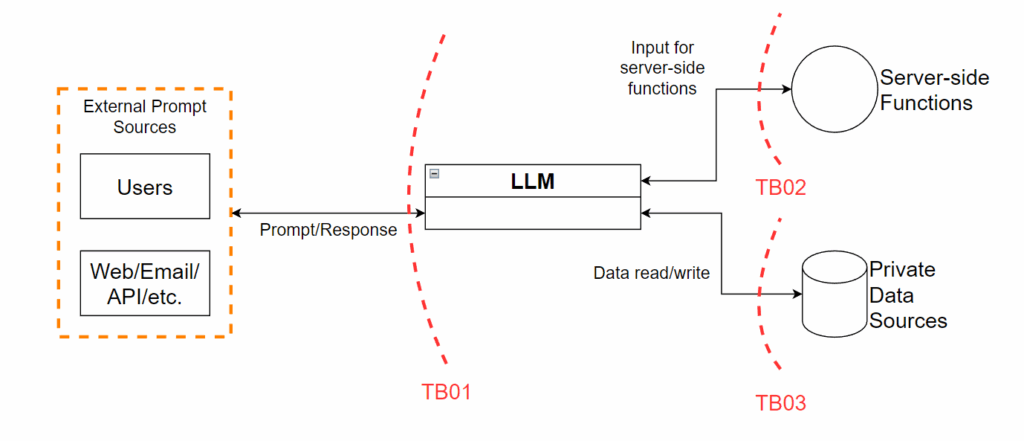

LLM Threat Modeling & Risk Assessments

The Problem:

Most teams don’t recognize the risks of LLMs until it’s too late. Without proper threat modeling, prompt injection, RAG data leakage, or agent overreach can go undetected.

Our Approach:

We perform AI-specific threat modeling using:

OWASP Top 10 for LLM Applications and STRIDE

Architecture reviews of embeddings, vector stores, and agents

Controlled prompt testing and misuse scenarios

Risk scoring and mitigation roadmaps

How It Helps:

You get a clear understanding of risks before going live, plus actionable steps to make your LLM implementation secure by design.

SSPM for AI Tools

The Problem:

AI-powered SaaS tools like GitHub Copilot, Grammarly, and OpenAI often bypass standard security reviews, leaving blind spots in posture management.

Our Approach:

Symosis helps integrate these apps into your SaaS Security Posture Management (SSPM) platform by:

Building custom API ingestion and data mapping

Creating posture rules for AI-specific risks (e.g., data retention, account exposure)

Enabling continuous scoring and policy enforcement via Adaptive Shield, Obsidian, or DoControl
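Conceptually, an AI-specific posture rule is a check over a normalized configuration snapshot pulled from a tool's admin API. The sketch below assumes such a snapshot already exists as a Python dict. The field names (`retention_days`, `training_opt_out`, `sso_enforced`, `personal_accounts`), rule IDs, and weights are illustrative and do not reflect the schema of Adaptive Shield, Obsidian, DoControl, or any AI vendor.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PostureRule:
    rule_id: str
    description: str
    weight: int
    check: Callable[[dict], bool]


# Hypothetical rules over a normalized config snapshot; field names are illustrative,
# not the schema of any specific SSPM platform or AI SaaS vendor.
AI_RULES = [
    PostureRule("AI-01", "Prompt/response retention is 30 days or less", 3,
                lambda c: c.get("retention_days", float("inf")) <= 30),
    PostureRule("AI-02", "Customer data is excluded from model training", 5,
                lambda c: c.get("training_opt_out") is True),
    PostureRule("AI-03", "SSO is enforced for all accounts", 4,
                lambda c: c.get("sso_enforced") is True),
    PostureRule("AI-04", "No personal (non-corporate) accounts are linked", 2,
                lambda c: c.get("personal_accounts", 1) == 0),
]


def score_posture(config: dict, rules=AI_RULES) -> tuple[float, list[str]]:
    """Return a 0-100 weighted posture score and the IDs of failed rules.

    Missing fields fall back to defaults that fail the check, so unreadable
    settings lower the score instead of passing silently.
    """
    total = sum(r.weight for r in rules)
    failed = [r.rule_id for r in rules if not r.check(config)]
    passed_weight = sum(r.weight for r in rules if r.rule_id not in failed)
    return round(100 * passed_weight / total, 1), failed
```

For example, a snapshot with 90-day retention but all other controls in place fails only `AI-01` and scores 78.6.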

How It Helps:

Gives you the same real-time visibility and control over AI SaaS tools that you already have over your email and cloud platforms.

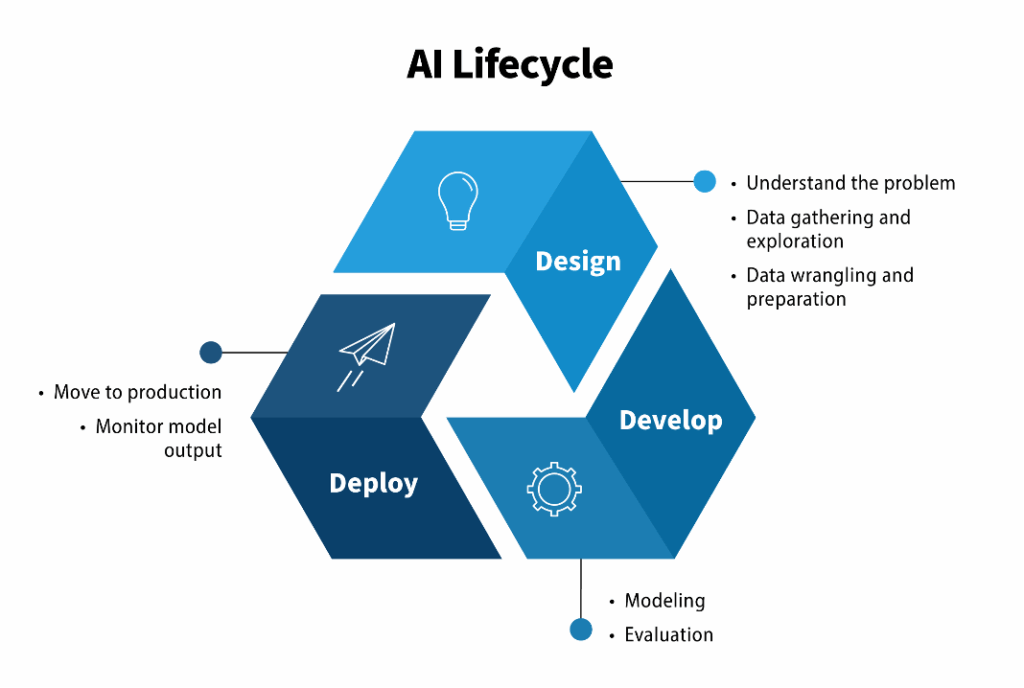

AI Model & Agent Lifecycle Security

The Problem:

Internal LLMs and autonomous agents introduce new risks across training, deployment, and monitoring—often outside existing AppSec programs.

Our Approach:

We secure your AI lifecycle by:

Locking down model access via API authentication and RBAC

Integrating model review into CI/CD

Monitoring for drift, hallucinations, or misuse

Ensuring traceability from prompt to response
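One common way to make prompt-to-response traceability tamper-evident is a hash chain, where each record stores the SHA-256 hash of the record before it. The sketch below is an illustration under stated assumptions rather than a description of any specific product: the `TraceLog` class and its fields are hypothetical, records live in memory rather than in durable storage, and in practice prompts would typically be redacted before being logged.

```python
import hashlib
import json
import uuid


class TraceLog:
    """Hypothetical append-only, hash-chained log linking each prompt to its response.

    Each record includes the hash of the previous record, so editing or deleting
    an earlier entry breaks verification of the chain.
    """

    GENESIS = "0" * 64

    def __init__(self):
        self.records = []

    def append(self, user: str, model_id: str, prompt: str, response: str) -> str:
        prev_hash = self.records[-1]["hash"] if self.records else self.GENESIS
        body = {
            "trace_id": str(uuid.uuid4()),
            "user": user,
            "model_id": model_id,
            "prompt": prompt,
            "response": response,
            "prev_hash": prev_hash,
        }
        body["hash"] = self._digest(body)
        self.records.append(body)
        return body["trace_id"]

    @staticmethod
    def _digest(body: dict) -> str:
        # Hash a canonical JSON encoding of every field except the hash itself.
        payload = {k: v for k, v in body.items() if k != "hash"}
        canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def verify(self) -> bool:
        """Recompute every hash and check each link back to the previous record."""
        prev_hash = self.GENESIS
        for rec in self.records:
            if rec["prev_hash"] != prev_hash or rec["hash"] != self._digest(rec):
                return False
            prev_hash = rec["hash"]
        return True
```

Because `verify()` recomputes every hash, altering any stored prompt or response after the fact causes verification to fail.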

How It Helps:

Prevents unauthorized use and model corruption, and catches drift early. Enables AI adoption without compromising security.

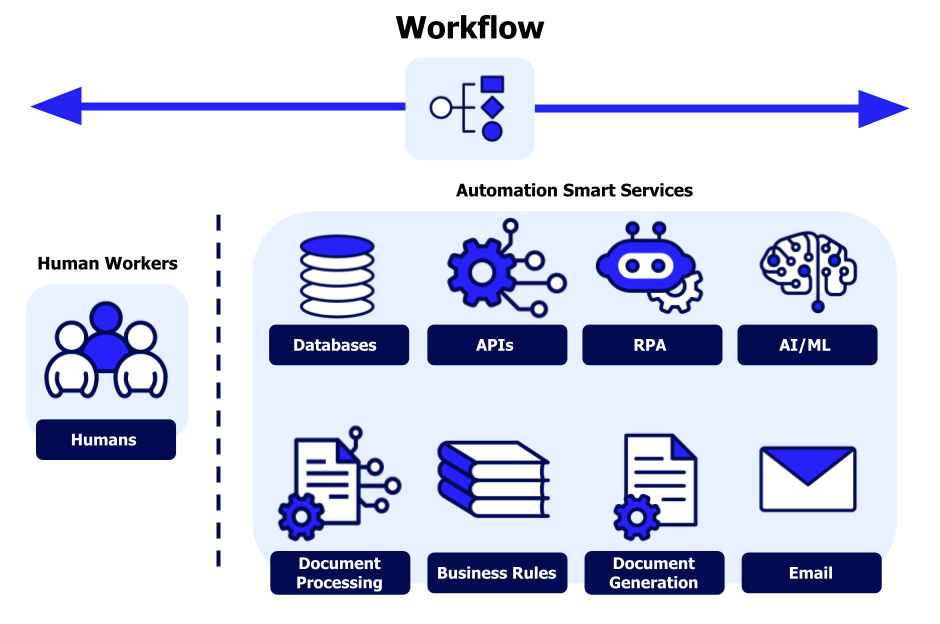

Custom AI Workflow Automation

The Problem:

Security and GRC teams rely on manual playbooks, fragmented tools, and slow reviews—leading to delays, fatigue, and audit gaps.

Our Approach:

Symosis builds intelligent, LLM-powered workflows for:

Risk reviews and evidence collection

SOC alert triage and summarization

Playbook generation and policy drafting

These workflows are delivered through tools such as ServiceNow, Jira, Smartsheet, or custom apps.

How It Helps:

Cuts response time, improves accuracy, and reduces dependency on manual reviews—without sacrificing transparency or oversight.